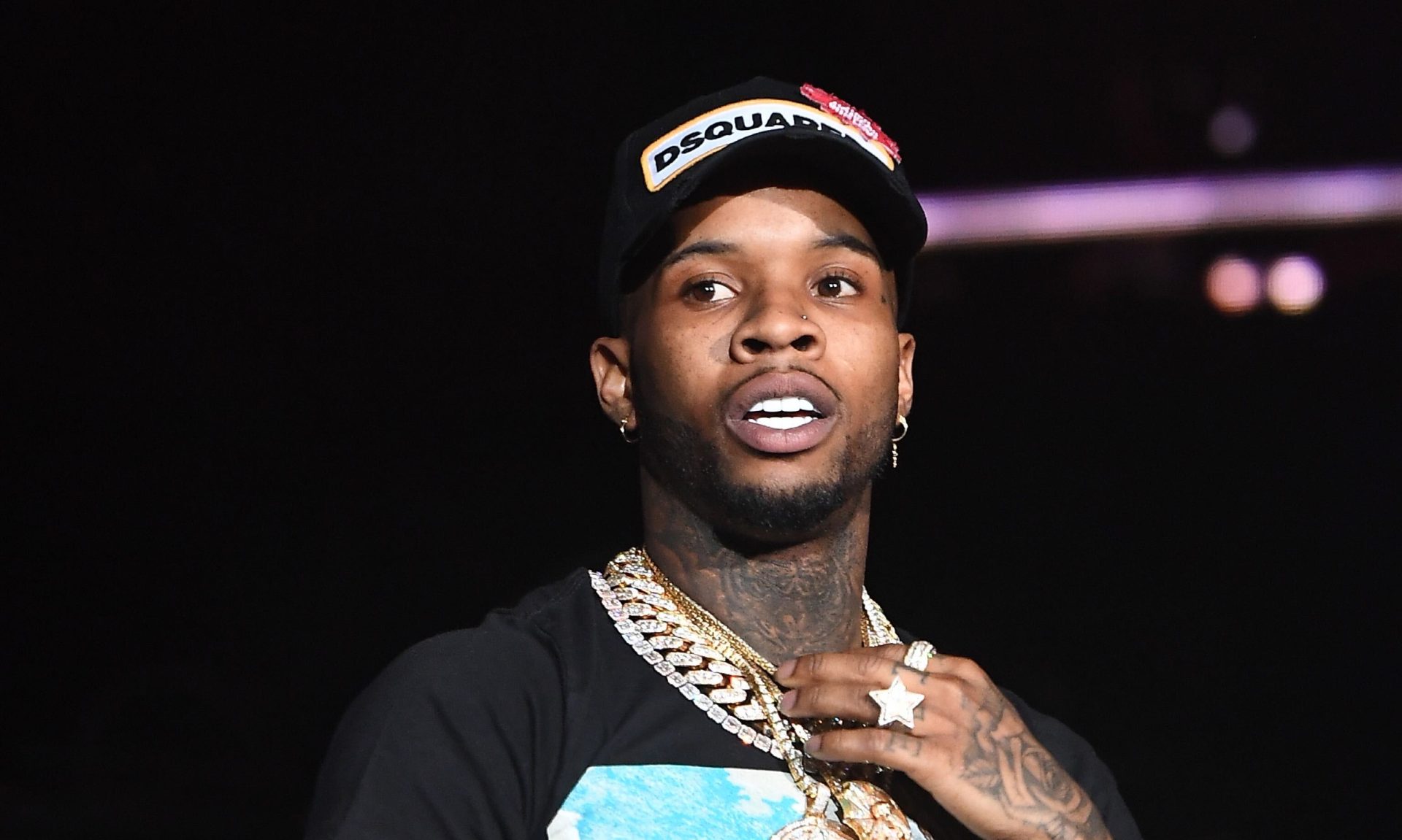

MIT’s mini cheetah robot has broken its own personal best (PB) speed, hitting 8.72 mph (14.04 km/h) thanks to a new model-free reinforcement learning system that lets the robot figure out on its own the best way to run and adapt to different terrain, without relying on human analysis.

The mini cheetah isn’t the fastest quadruped robot going around. In 2012, its larger Cheetah sibling reached a top speed of 28.3 mph (45.5 km/h), but the mini cheetah being developed by MIT’s Improbable AI Lab and the National Science Foundation’s Institute for AI and Fundamental Interactions (IAIFI) is much more agile and is able to learn without even taking a step.

In a new video, the quadruped robot can be seen crashing into barriers and recovering, racing through obstacles, running with one leg out of action, and adapting to slippery, icy terrain as well as hills of loose gravel. This adaptability is thanks to a simple neural network that can make assessments of new situations that could put its hardware under high stress.

Traditionally, how a robot moves is controlled by a system that uses data based on an analysis of how mechanical limbs move to create models that serve as guides. However, these models are often inefficient and inadequate because it isn’t possible to anticipate every contingency.

When a robot is running at top speed, it’s operating at the limits of its hardware, which makes it very hard to model, so the robot has trouble adapting quickly to sudden changes in its environment. To overcome this, instead of an analytically designed robot, such as Boston Dynamics’ Spot, which relies on humans analyzing the physics of movement and manually configuring the robot’s hardware and software, the MIT team has opted for one that learns by experience.

In this approach, the robot learns by trial and error without a human in the loop. If the robot has enough experience of different terrains, it can be made to automatically improve its behavior. And this experience doesn’t even need to be in the real world. According to the team, using simulations, the mini cheetah can accumulate 100 days of experience in three hours while standing still.
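As a rough back-of-the-envelope check on those figures, the short Python sketch below works out how much faster than real time the simulated experience accumulates. The function name and parameters are illustrative only; the article does not say how the simulator reaches this rate.

```python
def simulation_speedup(sim_days: float, wall_clock_hours: float) -> float:
    """Return how many times faster than real time experience is gathered.

    Hypothetical helper for illustration; not part of the MIT team's code.
    """
    simulated_hours = sim_days * 24.0  # convert simulated days to hours
    return simulated_hours / wall_clock_hours


# Figures quoted in the article: 100 days of experience in 3 hours.
speedup = simulation_speedup(sim_days=100, wall_clock_hours=3)
print(f"{speedup:.0f}x real time")  # prints "800x real time"
```

In other words, the quoted numbers imply the robot gathers experience roughly 800 times faster in simulation than it could by running in the real world.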

“We developed an approach by which the robot’s behavior improves from simulated experience, and our approach critically also enables successful deployment of those learned behaviors in the real world,” said MIT PhD student Gabriel Margolis and IAIFI postdoc Ge Yang. “The intuition behind why the robot’s running skills work well in the real world is: Of all the environments it sees in this simulator, some will teach the robot skills that are useful in the real world. When operating in the real world, our controller identifies and executes the relevant skills in real-time.”

With such a system, the researchers claim that it is possible to scale up the technology, which the traditional paradigm can’t readily do.

“A more practical way to build a robot with many diverse skills is to tell the robot what to do and let it figure out the how,” added Margolis and Yang. “Our system is an example of this. In our lab, we’ve begun to apply this paradigm to other robotic systems, including hands that can pick up and manipulate many different objects.”

The video below shows the mini cheetah demonstrating what it has learned.

Mini-Cheetah

Source: MIT