
https://www.youtube.com/watch?v=z0UHdAI3sXI

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have released an open-source simulation engine that can construct photorealistic environments to train and test autonomous vehicles.

Training neural networks to drive vehicles autonomously requires a large amount of data. Much of this data is difficult to collect in the real world using real vehicles. Researchers can’t simply crash a car to teach a neural network not to crash a car, so they rely on simulated environments for this kind of data. That is where simulated training environments, like CSAIL’s VISTA 2.0, come in.

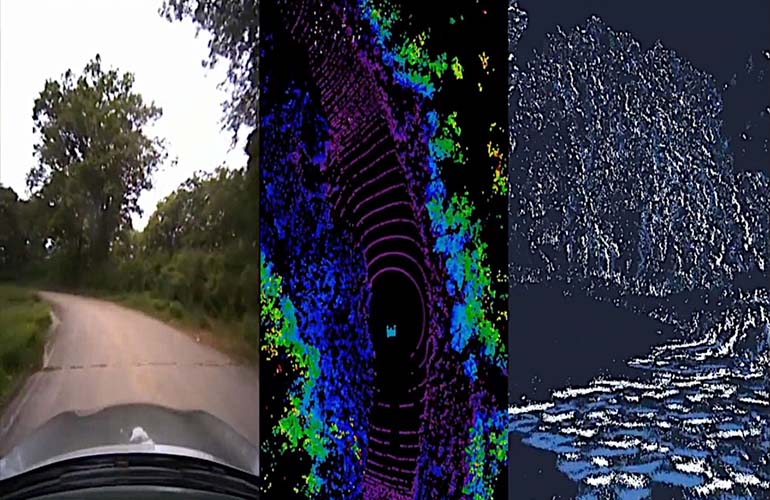

VISTA 2.0, an updated version of the team’s previous model, VISTA, is a data-driven simulation environment that is photorealistically rendered from real-world data. It can simulate complex sensor types, as well as interactive scenarios and intersections, at scale.

“Today, only companies have software like the type of simulation environments and capabilities of VISTA 2.0, and this software is proprietary. With this release, the research community will have access to a powerful new tool for accelerating the research and development of adaptive robust control for autonomous driving,” said MIT Professor and CSAIL Director Daniela Rus, senior author on a paper about the research.

VISTA 2.0’s photorealistic environment reflects a recent trend in the autonomous vehicle industry. Developers are moving away from human-designed simulation environments and toward ones built from real-world data.

These environments are appealing because they allow for direct transfer to reality. However, it can be difficult to synthesize the richness and complexity of all the sensors autonomous vehicles need. For example, to replicate LiDAR in these environments, researchers essentially need to generate new 3D point clouds with millions of points using only a sparse view of the world.

To get around this, the MIT team projected the data collected from the car into a 3D space derived from the LiDAR data. They then let a new virtual vehicle drive around locally from where the original vehicle was and, with the help of neural networks, projected the sensory information back into the frame of view of the new virtual vehicle.
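The geometric part of that idea can be illustrated with a minimal sketch. It is not taken from VISTA 2.0: the function name `to_virtual_frame`, the axis convention (x forward, y left, z up), and the pose parameters are assumptions made for illustration, and the neural rendering step that fills in missing detail is not shown. The sketch only re-expresses recorded 3D points in the frame of a virtual vehicle that has been shifted and rotated relative to the original one.

```python
import math


def to_virtual_frame(points, dx, dy, dyaw):
    """Re-express 3D points in the frame of a displaced virtual vehicle.

    Assumed convention (hypothetical, for illustration only):
    x points forward, y points left, z points up, all in metres.
    The virtual vehicle sits at (dx, dy) in the original vehicle's frame
    and is rotated by dyaw radians about the vertical axis.
    """
    c, s = math.cos(dyaw), math.sin(dyaw)
    out = []
    for x, y, z in points:
        # Move the origin to the virtual vehicle's position.
        tx, ty = x - dx, y - dy
        # Rotate by -dyaw so the axes line up with the virtual vehicle's heading.
        out.append((c * tx + s * ty, -s * tx + c * ty, z))
    return out


if __name__ == "__main__":
    # One recorded point, 10 m straight ahead of the original vehicle.
    recorded = [(10.0, 0.0, 0.5)]
    # Virtual vehicle shifted 1 m to the left, same heading.
    print(to_virtual_frame(recorded, dx=0.0, dy=1.0, dyaw=0.0))
```

In this example, a point that was straight ahead of the original vehicle ends up 1 m to the right of the virtual vehicle, which is what a driver who moved 1 m to the left would see.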

VISTA 2.0, an open-source simulation engine from MIT CSAIL, can simulate environments for training self-driving cars. | Source: MIT CSAIL

The team also simulated, in real time, event-based cameras, which operate at speeds greater than thousands of events per second. With all of these sensors simulated, you can move vehicles around in the simulation, simulate different types of events, and drop in brand new vehicles that were not part of the original data.

“This is a massive jump in capabilities of data-driven simulation for autonomous vehicles, as well as the increase of scale and ability to handle greater driving complexity,” said Alexander Amini, CSAIL PhD student and co-lead author on two new papers, together with fellow PhD student Tsun-Hsuan Wang. “VISTA 2.0 demonstrates the ability to simulate sensor data far beyond 2D RGB cameras, but also extremely high dimensional 3D lidars with millions of points, irregularly timed event-based cameras, and even interactive and dynamic scenarios with other vehicles as well.”

MIT’s team took a full-scale car out to test VISTA 2.0 in Devens, Massachusetts. The team saw immediate transferability of results, with both failures and successes. Moving forward, CSAIL hopes to enable the neural network to understand and respond to gestures from other drivers, like a wave, nod or blinker switch of acknowledgement.

Amini and Wang wrote the paper alongside Zhijian Liu, MIT CSAIL PhD student; Igor Gilitschenski, assistant professor in computer science at the University of Toronto; Wilko Schwarting, AI research scientist and MIT CSAIL PhD ’20; Song Han, associate professor at MIT’s Department of Electrical Engineering and Computer Science; Sertac Karaman, associate professor of aeronautics and astronautics at MIT; and Daniela Rus, MIT professor and CSAIL director.