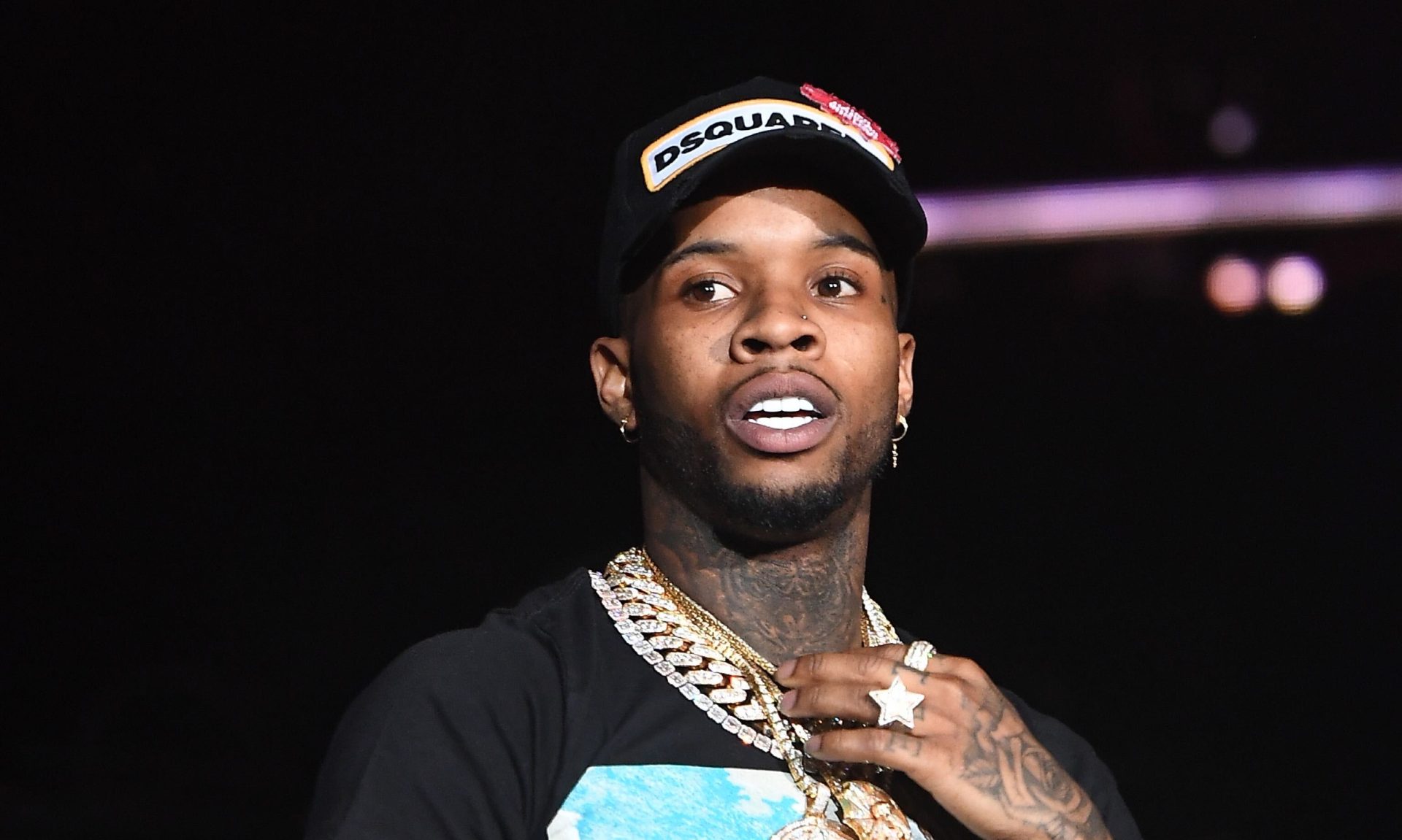

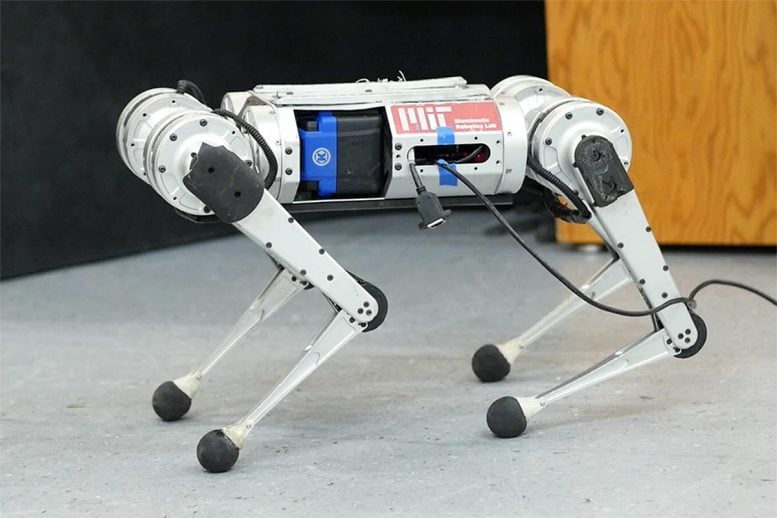

MIT’s mini cheetah, using a model-free reinforcement learning system, broke the record for the fastest run recorded. Credit: Photo courtesy of MIT CSAIL.

CSAIL scientists came up with a learning pipeline for the four-legged robot that learns to run entirely by trial and error in simulation.

It’s been roughly 23 years since one of the first robotic animals trotted on the scene, defying classical notions of our cuddly four-legged friends. Since then, a barrage of walking, dancing, and door-opening machines have commanded their presence, a sleek mixture of batteries, sensors, metal, and motors. Missing from the list of cardio activities was one both loved and loathed by humans (depending on whom you ask), and which proved slightly trickier for the bots: learning to run.

Scientists from …

https://www.youtube.com/watch?v=-BqNl3AtPVw

The MIT mini cheetah learns to run faster than ever, using a learning pipeline that’s entirely trial and error in simulation.

Q: Previous agile running controllers for the MIT Cheetah 3 and mini cheetah, as well as for Boston Dynamics’ robots, are “analytically designed,” relying on human engineers to analyze the physics of locomotion, formulate efficient abstractions, and implement a specialized hierarchy of controllers to make the robot balance and run. You use a “learn-by-experience model” for running instead of programming it. Why?

A: Programming how a robot should act in every possible situation is simply very hard. The process is tedious, because if a robot were to fail on a particular terrain, a human engineer would need to identify the cause of failure and manually adapt the robot controller, and this process can require substantial human time. Learning by trial and error removes the need for a human to specify precisely how the robot should behave in every situation. This would work if: (1) the robot can experience an extremely wide range of terrains and (2) the robot can automatically improve its behavior with experience.

Thanks to modern simulation tools, our robot can accumulate 100 days’ worth of experience on diverse terrains in just a few hours of actual time. We developed an approach by which the robot’s behavior improves from simulated experience, and our approach critically also enables successful deployment of those learned behaviors in the real world. The intuition behind why the robot’s running skills work well in the real world is: Of all the environments it sees in this simulator, some will teach the robot skills that are useful in the real world. When operating in the real world, our controller identifies and executes the relevant skills in real time.
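To make the idea of improving from varied simulated experience concrete, here is a deliberately tiny sketch. It is not the CSAIL pipeline: the article describes model-free reinforcement learning, whereas this toy uses plain random-search hill climbing on a single made-up parameter. The names `simulate_run`, `stride_gain`, and `friction`, and the reward formula itself, are hypothetical stand-ins chosen only for illustration.

```python
import random


def simulate_run(stride_gain, friction):
    """Toy stand-in for a physics simulator (hypothetical reward).

    The reward is highest when the controller parameter matches
    the terrain's friction value.
    """
    return 1.0 - (stride_gain - friction) ** 2


def average_reward(stride_gain, terrains):
    """Mean reward of one controller setting across all sampled terrains."""
    return sum(simulate_run(stride_gain, f) for f in terrains) / len(terrains)


def learn_by_trial_and_error(steps=200, seed=0):
    """Random-search hill climbing over a single controller parameter."""
    rng = random.Random(seed)
    # Sample a range of simulated terrains instead of hand-tuning for one.
    terrains = [rng.uniform(0.2, 1.0) for _ in range(50)]

    gain = 0.0
    initial = best = average_reward(gain, terrains)
    for _ in range(steps):
        # Trial: perturb the current parameter at random.
        candidate = gain + rng.gauss(0.0, 0.1)
        score = average_reward(candidate, terrains)
        # Error feedback: keep the change only if it scored better.
        if score > best:
            gain, best = candidate, score
    return gain, initial, best


if __name__ == "__main__":
    gain, initial, best = learn_by_trial_and_error()
    print(f"learned gain={gain:.3f}, reward {initial:.3f} -> {best:.3f}")
```

No engineer tells the loop which parameter value is right; it is only told what counts as a good run, which mirrors the two conditions in the answer above: exposure to many terrains and automatic improvement from experience.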

Q: Can this approach be scaled beyond the mini cheetah? What excites you about its future applications?

A: At the heart of artificial intelligence research is the trade-off between what the human needs to build in (nature) and what the machine can learn on its own (nurture). The traditional paradigm in robotics is that humans tell the robot both what task to do and how to do it. The problem is that such a framework is not scalable, because it would take immense human engineering effort to manually program a robot with the skills to operate in many diverse environments. A more practical way to build a robot with many diverse skills is to tell the robot what to do and let it figure out the how. Our system is an example of this. In our lab, we’ve begun to apply this paradigm to other robotic systems, including hands that can pick up and manipulate many different objects.

This work was supported by the …