As artificial intelligence and deep learning techniques become increasingly advanced, engineers will need to create hardware that can run their computations both reliably and efficiently. Neuromorphic computing hardware, which is inspired by the structure and biology of the human brain, could be particularly promising for supporting the operation of sophisticated deep neural networks (DNNs).

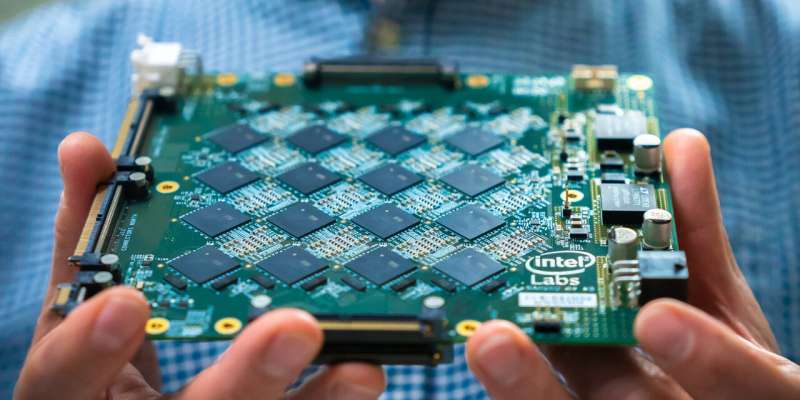

Researchers at Graz University of Technology and Intel have recently demonstrated the great potential of neuromorphic computing hardware for running DNNs in an experimental setting. Their paper, published in Nature Machine Intelligence and funded by the Human Brain Project (HBP), shows that neuromorphic computing hardware could run large DNNs 4 to 16 times more efficiently than conventional (i.e., non-brain-inspired) computing hardware.

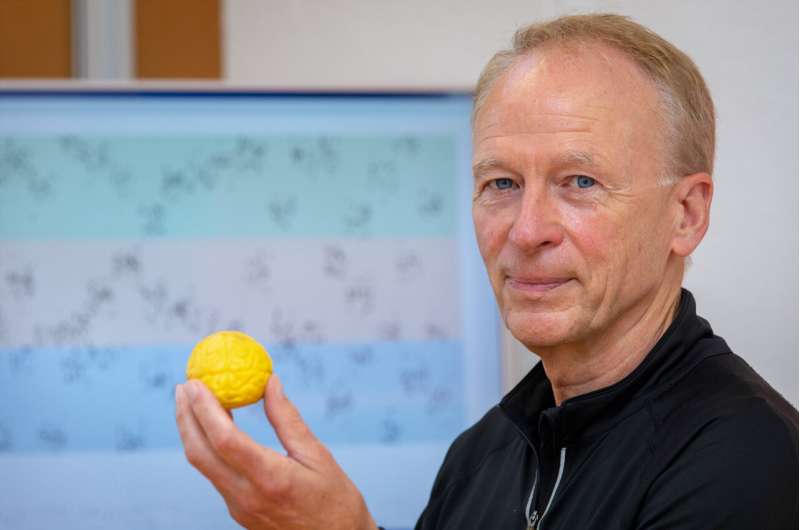

“We have shown that a large class of DNNs, those that process temporally extended inputs such as sentences, can be implemented substantially more energy-efficiently if one solves the same problems on neuromorphic hardware with brain-inspired neurons and neural network architectures,” Wolfgang Maass, one of the researchers who carried out the study, told TechXplore. “Furthermore, the DNNs that we considered are critical for higher-level cognitive functions, such as finding relations between sentences in a story and answering questions about its content.”

In their tests, Maass and his colleagues evaluated the energy efficiency of a large neural network running on a neuromorphic computing chip created by Intel. This DNN was specifically designed to process long sequences of letters or digits, such as sentences.

The researchers measured the energy consumption of the Intel neuromorphic chip and of a standard computer chip while running this same DNN, and then compared their performance. Interestingly, they found that adapting the neuron models implemented in the hardware so that they resembled neurons in the brain gave the DNN new functional properties, improving its energy efficiency.

“Enhanced energy efficiency of neuromorphic hardware has often been conjectured, but it was difficult to demonstrate for demanding AI tasks,” Maass explained. “The reason is that if one replaces the artificial neuron models used by DNNs in AI, which are activated tens of thousands of times or more per second, with more brain-like ‘lazy’ and therefore more energy-efficient spiking neurons that resemble those in the brain, one usually had to make the spiking neurons hyperactive, far more so than neurons in the brain (where an average neuron emits a signal only a few times per second). These hyperactive neurons, however, consumed too much energy.”
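To make the energy argument concrete, here is a minimal sketch, assuming a textbook leaky integrate-and-fire (LIF) neuron and a toy event-driven cost model in which every spike costs the same fixed amount of energy. `ENERGY_PER_SPIKE` and the helper names are hypothetical placeholders, not figures or code from the study or from Intel's chip. Driving the neuron harder makes it fire more often, and under this assumption its energy bill grows with its firing rate.

```python
import math

# Hypothetical cost per spike event, in arbitrary units (not a measured value).
ENERGY_PER_SPIKE = 1.0


def lif_spike_count(input_current, duration_s=1.0, dt=1e-3, tau_m=20e-3, v_th=1.0):
    """Simulate one leaky integrate-and-fire neuron under constant input.

    Returns the number of spikes emitted during `duration_s` seconds.
    """
    decay = math.exp(-dt / tau_m)  # membrane leak applied at every time step
    v = 0.0
    spikes = 0
    for _ in range(int(round(duration_s / dt))):
        # Leaky integration: v relaxes toward the input current
        v = decay * v + (1.0 - decay) * input_current
        if v >= v_th:
            spikes += 1
            v = 0.0  # reset the membrane potential after a spike
    return spikes


def event_driven_energy(spike_count):
    """Toy cost model (assumption): energy grows linearly with the spike count."""
    return spike_count * ENERGY_PER_SPIKE


for current in (0.5, 1.2, 3.0):
    n = lif_spike_count(current)
    print(f"input={current}: {n} spikes/s, energy={event_driven_energy(n)} units")
```

An input below threshold produces no spikes at all, while a strong input pushes the neuron toward the hyperactive regime Maass describes; in an event-driven cost model that regime is exactly where the energy advantage erodes.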

Many neurons in the brain require an extended resting period after being active for a while. Previous studies aimed at replicating biological neural dynamics in hardware often achieved disappointing results because of the hyperactivity of the artificial neurons, which consumed too much energy when running particularly large and complex DNNs.

In their experiments, Maass and his colleagues showed that the tendency of many biological neurons to rest after spiking could be replicated in neuromorphic hardware and used as a “computational trick” to solve time series processing tasks more efficiently. In these tasks, new information needs to be combined with information collected in the recent past (e.g., sentences from a story that the network processed earlier).

“We showed that the network just needs to check which neurons are currently most tired, i.e., reluctant to fire, since these are the ones that were active in the recent past,” Maass said. “Using this strategy, a clever network can reconstruct what information was recently processed. Thus, ‘laziness’ can have advantages in computing.”
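The “tired neuron” readout can be sketched with adaptive LIF neurons, assuming a threshold-adaptation model in which each spike raises a neuron's firing threshold and that increase fades with a slow time constant. This is a common textbook way to model spike-frequency adaptation, not necessarily the exact neuron model the researchers ran on Intel's chip; all parameter values and the helper names `simulate_alif` and `most_fatigued` are hypothetical. In the example, neuron 0 is driven first, neuron 2 later, and neuron 1 never, so ranking neurons by their leftover adaptation recovers which ones were active most recently.

```python
import math


def simulate_alif(inputs, dt=1e-3, tau_m=20e-3, tau_a=700e-3, v_th0=1.0, beta=0.5):
    """Adaptive LIF neurons: each spike raises the threshold, which then decays slowly.

    inputs: one list of input currents (one value per neuron) for every time step.
    Returns each neuron's final adaptation value, i.e. its remaining 'fatigue'.
    """
    n = len(inputs[0])
    v = [0.0] * n  # membrane potentials
    a = [0.0] * n  # adaptation: +1 per spike, decays with time constant tau_a
    dm = math.exp(-dt / tau_m)  # fast membrane decay per step
    da = math.exp(-dt / tau_a)  # slow adaptation decay per step
    for currents in inputs:
        for i in range(n):
            v[i] = dm * v[i] + (1.0 - dm) * currents[i]
            a[i] *= da
            # Effective threshold is raised by recent spiking activity
            if v[i] >= v_th0 + beta * a[i]:
                v[i] = 0.0
                a[i] += 1.0
    return a


def most_fatigued(adaptation, k):
    """Indices of the k neurons with the highest adaptation (most 'tired')."""
    return sorted(range(len(adaptation)), key=lambda i: adaptation[i], reverse=True)[:k]


drive = 3.0
phase1 = [[drive, 0.0, 0.0]] * 100  # 100 ms: only neuron 0 receives input
gap1 = [[0.0, 0.0, 0.0]] * 200      # 200 ms: no input
phase2 = [[0.0, 0.0, drive]] * 100  # 100 ms: only neuron 2 receives input
gap2 = [[0.0, 0.0, 0.0]] * 200      # 200 ms: no input

fatigue = simulate_alif(phase1 + gap1 + phase2 + gap2)
print(most_fatigued(fatigue, 3))  # → [2, 0, 1]
```

Because the adaptation variable decays over hundreds of milliseconds rather than the roughly 20 ms of the membrane, it acts as a short-term memory trace that outlasts the input itself, even though no neuron is firing by the end of the run.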

The researchers demonstrated that, when running the same DNN, Intel's neuromorphic computing chip consumed 4 to 16 times less energy than a conventional chip. In addition, they outlined the possibility of leveraging the artificial neurons' lack of activity after they spike to significantly improve the hardware's performance on time series processing tasks.
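For reference, consuming r times less energy corresponds to a fractional saving of 1 - 1/r, so the reported range works out as follows:

```latex
\text{saving} = 1 - \frac{E_{\text{neuromorphic}}}{E_{\text{conventional}}} = 1 - \frac{1}{r},
\qquad r = 4 \;\Rightarrow\; 75\%, \qquad r = 16 \;\Rightarrow\; 93.75\%
```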

In the future, the Intel chip and the approach proposed by Maass and his colleagues could help to boost the efficiency of neuromorphic computing hardware in running large and complex DNNs. In their future work, the team would also like to devise more bio-inspired strategies to enhance the performance of neuromorphic chips, as current hardware only captures a tiny portion of the complex dynamics and functions of the human brain.

“For example, human brains can learn from seeing a scene or hearing a sentence just once, whereas DNNs in AI require excessive training on zillions of examples,” Maass added. “One trick that the brain uses for fast learning is to use different learning methods in different parts of the brain, whereas DNNs typically use just one. In my next studies, I would like to enable neuromorphic hardware to develop a ‘personal’ memory based on its past ‘experiences,’ just like a human would, and use this individual experience to make better decisions.”

Demonstrating significant energy savings using neuromorphic hardware

Arjun Rao et al, A Long Short-Term Memory for AI Applications in Spike-based Neuromorphic Hardware, Nature Machine Intelligence (2022). DOI: 10.1038/s42256-022-00480-w

© 2022 Science X Network

Citation:

A neuromorphic computing architecture that can run some deep neural networks more efficiently (2022, June 14)

retrieved 15 June 2022

from https://techxplore.com/news/2022-06-neuromorphic-architecture-deep-neural-networks.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without the written permission. The content is provided for information purposes only.